I have been working with NetFlow and other forms of flow information for quite some time now, and have been struggling with how to get the right flows to the right tools. Within our environment, I deal not only with large flow counts and many sources, but also with different tools that require different flow information. The tool that we use for flow within our Orion environment is NetFlow Traffic Analyzer (NTA).

I wanted to share my solution with the community, as many of you have given me ideas and direction.

So here it is.

Requirements:

- Configure devices to provide flow data with minimal impact to the device

- Present select flow data to multiple tools

Sounds easy enough, but let’s take a closer look at some of the issues that have cropped up for me.

First, device configuration. As many of you may know, most devices today support some type of flow information (NetFlow, SFlow, JFlow, IPFIX, etc.), but there are limitations on some device platforms. As an example, if you configure NetFlow on a Cisco device with multiple flow export destinations, you can put so much load on the device that it is unable to perform.

So how do you send the same flow to multiple tools?

We used Samplicate (the samplicator project, https://github.com/sleinen/samplicator). This program listens for UDP datagrams on a network port and sends copies of these datagrams on to a set of destinations. Optionally, it can perform sampling, i.e. rather than forwarding every packet, forward only 1 in N. Another option is that it can "spoof" the IP source address, so that the copies appear to come from the original source rather than the relay (good for Orion NTA). We run this on an Ubuntu VM; you can use any supported Linux platform. There are several options for how traffic is handled by the application, and you should choose what is needed for your environment.
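To make the relay idea concrete, here is a minimal Python sketch of the fan-out and 1-in-N sampling behavior described above. It is only an illustration, not the program's actual code (which is written in C), and it does not spoof the source address, since that needs raw sockets. The names `Receiver`, `fan_out`, and `relay`, and the addresses in the usage note, are made up for this example.

```python
import socket
from typing import Iterable, Tuple

Address = Tuple[str, int]  # (IP address, UDP port)


class Receiver:
    """One forwarding target that gets every Nth datagram (N = every_n)."""

    def __init__(self, address: Address, every_n: int = 1) -> None:
        if every_n < 1:
            raise ValueError("every_n must be at least 1")
        self.address = address
        self.every_n = every_n
        self._seen = 0  # datagrams seen since the last forwarded one

    def should_forward(self) -> bool:
        """Count one datagram and report whether it should be forwarded."""
        self._seen += 1
        if self._seen >= self.every_n:
            self._seen = 0
            return True
        return False


def fan_out(payload: bytes, receivers: Iterable[Receiver], sock) -> int:
    """Send a copy of payload to each receiver due for one; return the count."""
    sent = 0
    for receiver in receivers:
        if receiver.should_forward():
            sock.sendto(payload, receiver.address)
            sent += 1
    return sent


def relay(listen: Address, receivers: Iterable[Receiver]) -> None:
    """Listen on one UDP port and copy every datagram to the receivers."""
    receivers = list(receivers)
    inbound = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    inbound.bind(listen)
    outbound = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    while True:
        # The original sender address is ignored here; copies leave with
        # the relay's own source address (no spoofing in this sketch).
        payload, _source = inbound.recvfrom(65535)
        fan_out(payload, receivers, outbound)
```

For example, `relay(("0.0.0.0", 9996), [Receiver(("192.0.2.10", 2055)), Receiver(("192.0.2.20", 5502), every_n=10)])` would copy everything arriving on port 9996 to the first address and one datagram in ten to the second.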

Now this is all straightforward: the same flow comes in, then goes out to Tool A / Tool B / Tool C. But here is the “rub”: Tool A only wants NetFlow and IPFIX, Tool B only wants SFlow from the load balancers, and Tool C wants it all. And of course Tool B can only receive on port 2055, while Tool C can only receive on port 5502. You can start to see the configuration issues at the device level.

We came up with a port scheme something like this:

FLOW PORTS –

9995 - SteelFlow

9996 - F5 SFlow

9997 - Cisco NetFlow / IPFIX

9998 - Cisco SFlow

9999 - BlueCoat

You may look at this and say it is too complicated, and I would tend to agree with you if there were not so many tools needing different flows. This is the beauty of Samplicate: it can take the UDP traffic on one port and send it out on another port. So the F5 SFlow traffic comes in on port 9996 but is presented to Tool B on port 2055 and to Tool C on port 5502. Why a different port for each type of flow? With this type of setup I can control which tool sees which traffic, and on which port.

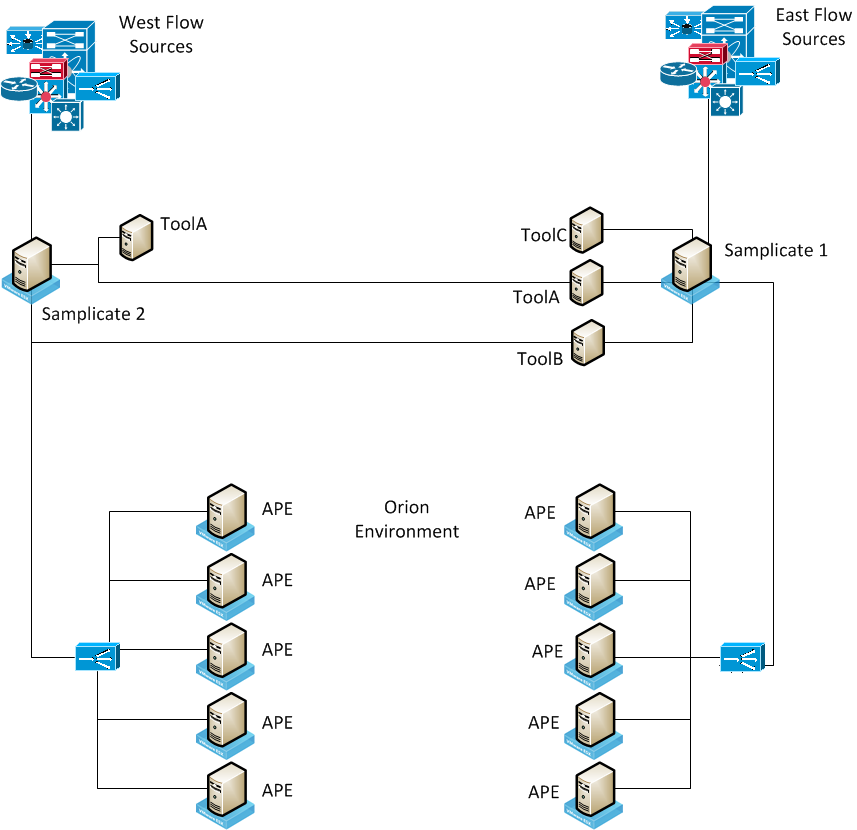
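One way to picture the port scheme is as a lookup table from the port a flow type arrives on to the tools and ports it is forwarded to. The Python sketch below is only an illustration of that idea, not our actual configuration. The Tool B and Tool C ports come from the description above; the Tool A port is not given here, so `TOOL_A_PORT` is a placeholder, and sending SteelFlow, Cisco SFlow, and BlueCoat only to Tool C is an assumption based on "Tool C wants it all".

```python
from typing import Dict, List, Tuple

Destination = Tuple[str, int]  # (tool name, port the tool listens on)

TOOL_A_PORT = 2056  # assumption: placeholder, the real port is not stated

# Listening port on the relay -> where copies of that flow type are sent.
ROUTES: Dict[int, List[Destination]] = {
    9995: [("ToolC", 5502)],                         # SteelFlow
    9996: [("ToolB", 2055), ("ToolC", 5502)],        # F5 SFlow
    9997: [("ToolA", TOOL_A_PORT), ("ToolC", 5502)], # Cisco NetFlow / IPFIX
    9998: [("ToolC", 5502)],                         # Cisco SFlow
    9999: [("ToolC", 5502)],                         # BlueCoat
}


def destinations_for(listen_port: int) -> List[Destination]:
    """Return the tools (and their ports) fed from one listening port."""
    return list(ROUTES.get(listen_port, []))


def ports_seen_by(tool: str) -> List[int]:
    """Return the listening ports whose traffic reaches the given tool."""
    return sorted(
        port
        for port, destinations in ROUTES.items()
        if any(name == tool for name, _ in destinations)
    )
```

Keeping one listening port per flow type means a tool's view can be changed by editing a single row, without touching any device configuration.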

We also have two instances of Samplicate. We are a global company, so we “divide” our world at the Mississippi River. Devices east of the river and in EMEA send to instance 1, and devices west of the river and in APAC send to instance 2.

That takes care of directing the traffic to the needed tool.

My next issue was within my Orion environment. A quick overview of my environment: I have 1 Primary Poller, 10 Additional Pollers (APEs), and 2 Additional Web Servers (AWS).

With all this flow data going to one box, I was killing that poller. I needed to have the traffic “balanced” across multiple APEs. Within my environment we have multiple F5 LTMs, and after working with my F5 team we were able to come up with a working VIP for the flows going to the Orion environment. That configuration took a bit of trial and error, but we got there in the end. The primary issue was that the F5 would not honor the “spoof” from the Samplicate boxes. The F5 saw the flows as coming from the Samplicate boxes and not from the different devices.

An iRule configuration was needed.

On the Pool we changed the Allow SNAT to No

On the Virtual Server we changed the Source Address Translation to Auto Map and added the iRule

    when CLIENT_ACCEPTED {
        snat [IP::client_addr]
    }

So the final hurdle was overcome. I can direct the required flows to the required tool on the required port and I am able to balance the load of the flows across my polling engines for NTA.

To sum up

- Devices east of the Mississippi send flows to instance 1 of Samplicate and devices west send to instance 2 of Samplicate

  - Yes, that is two different device configurations, but not 5 or 6, and these configurations are managed via NCM

- Through the use of Samplicate we are able to direct flow traffic to the tool that needs it and on the port required.

- Samplicate is configured to “spoof” the original flow source address

- With the use of VIPs (F5 environment) we are able to load balance the traffic across multiple APEs.

I hope this is of use to you and points you in the right direction.

Thank you to everyone who was able to help me with this project.

Disclaimer:

Please note, any custom scripts or other content posted herein are provided as a suggestion or recommendation to you for your internal use. This is not part of the SolarWinds software that you have purchased from SolarWinds, and the information set forth herein may come from third party customers. Your organization should internally review and assess to what extent, if any, such custom scripts or recommendations will be incorporated into your environment. Any custom scripts obtained herein are provided to you “AS IS” without indemnification, support, or warranty of any kind, express or implied. You elect to utilize the custom scripts at your own risk, and you will be solely responsible for the incorporation of the same, if any.