Good morning, fellow thwackers.

I am having an issue with two servers that run at 100% for two hours in the early morning. This is expected, as they run processing jobs for an external client. I recently changed some of the alerting for all nodes within our estate, including these ones.

I set the trigger delay to 15 minutes (condition must exist for more than 15 minutes before the alert is triggered), rather than the 2 minutes that was previously set.
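To show what I expect that setting to do, here is a rough Python sketch of how I understand a "condition must exist for more than 15 minutes" trigger. This is not SolarWinds code. The function name `sustained_alert_times`, the 90% threshold, the 5-minute polling interval and the dates are all assumptions made up for illustration.

```python
from datetime import datetime, timedelta


def sustained_alert_times(samples, threshold, hold):
    """Return the timestamps at which a sustained-condition alert would fire.

    samples   -- list of (timestamp, percent_used) pairs in time order
    threshold -- the condition is met when percent_used >= threshold
    hold      -- timedelta the condition must exist before the alert fires
    """
    fired = []
    breach_start = None  # start of the current unbroken run above threshold
    active = False       # True once the alert has fired for the current run
    for ts, value in samples:
        if value >= threshold:
            if breach_start is None:
                breach_start = ts
            # Fire once per run, only after the condition has held long enough.
            if not active and ts - breach_start > hold:
                fired.append(ts)
                active = True
        else:
            # A single sample below the threshold resets the timer.
            breach_start = None
            active = False
    return fired


# Hypothetical data: a two-hour job polled every 5 minutes, always at 100%.
start = datetime(2024, 1, 1, 2, 0)
steady = [(start + timedelta(minutes=5 * i), 100.0) for i in range(24)]
print(sustained_alert_times(steady, 90.0, timedelta(minutes=15)))

# Same job, but every third poll happens to read 80%.
dippy = [
    (start + timedelta(minutes=5 * i), 80.0 if i % 3 == 2 else 100.0)
    for i in range(24)
]
print(sustained_alert_times(dippy, 90.0, timedelta(minutes=15)))
```

Under these assumptions the steady run fires once, 20 minutes into the job, while the second run never fires because the timer keeps resetting. I do not know whether that is what is happening here, but it is the behaviour I would expect from this kind of setting.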

The screenshot below shows an example of the server running at 100% during the two hours the jobs are processed. Irrespective of how many resources the server has, the job will take 100% of them.

These servers (there are 8 in total) are basically hosting solutions in their own right:

256 GB RAM, 3 CPUs/12 cores, and they run on fast fibre storage. We monitor them because sometimes the application that handles the nightly jobs gets a little stuck and does not stop the jobs, so you have to restart the Apache service. I know you can set services to restart automatically, but we can't do that, as the client doesn't want to rely on an automatic process in the background. So sometimes, maybe four times a year, we restart the service.

We also have a 3rd party that takes calls during the night and at weekends. The trigger was set to 3 minutes (which seemed a little low to me). Apart from that, everything is a mirror of the alerts. However, we are not getting the alert when the processing job is running.

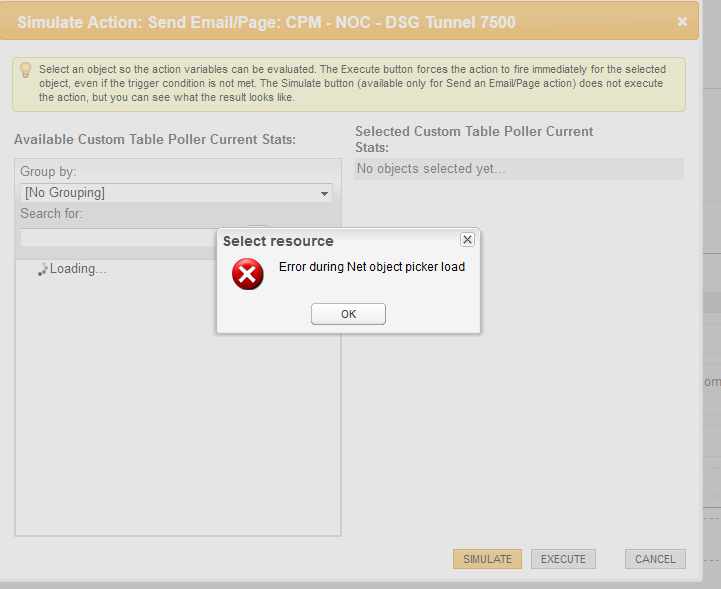

A test run works fine for the eight servers, so I can send a test email alert. But in a real-world environment we never see the alert.

I think the old alerting is a little broken somewhere. When I turn on the old alerting, it sends an email every few minutes to tell you everything is fine. Again, the alerts all have the same blanket config, so it can't be anything in the configuration.

Has anyone any advice on this?